In every agency and brand that Khairul Helmi, regional director of programmatic for APAC at Assembly, has worked with, brand safety has been the top priority. But now the risk isn't just about showing up next to inappropriate content; it's about being placed next to content that is designed to mislead but looks real and spreads quickly.

"Deepfakes, synthetic misinformation, and low-quality 'slop sites' are being created faster than verification technology can keep up," says Helmi. "Even as we improve our tech stack with pre-bid filters, verification partners, and marketplace curation, the hard truth is that technology alone is no longer enough."

Most existing brand safety systems predate the AI boom. They reliably detect adult content, hate speech, obvious disinformation, fraud, and invalid traffic but falter against AI news farms peddling false narratives, political or cultural nuances in local languages, or misinformation echoed by AI answer engines and chatbots.

Several marketers are noticing a shift from humans using AI as a tool to AI agents planning, producing, and distributing content faster and on their own.

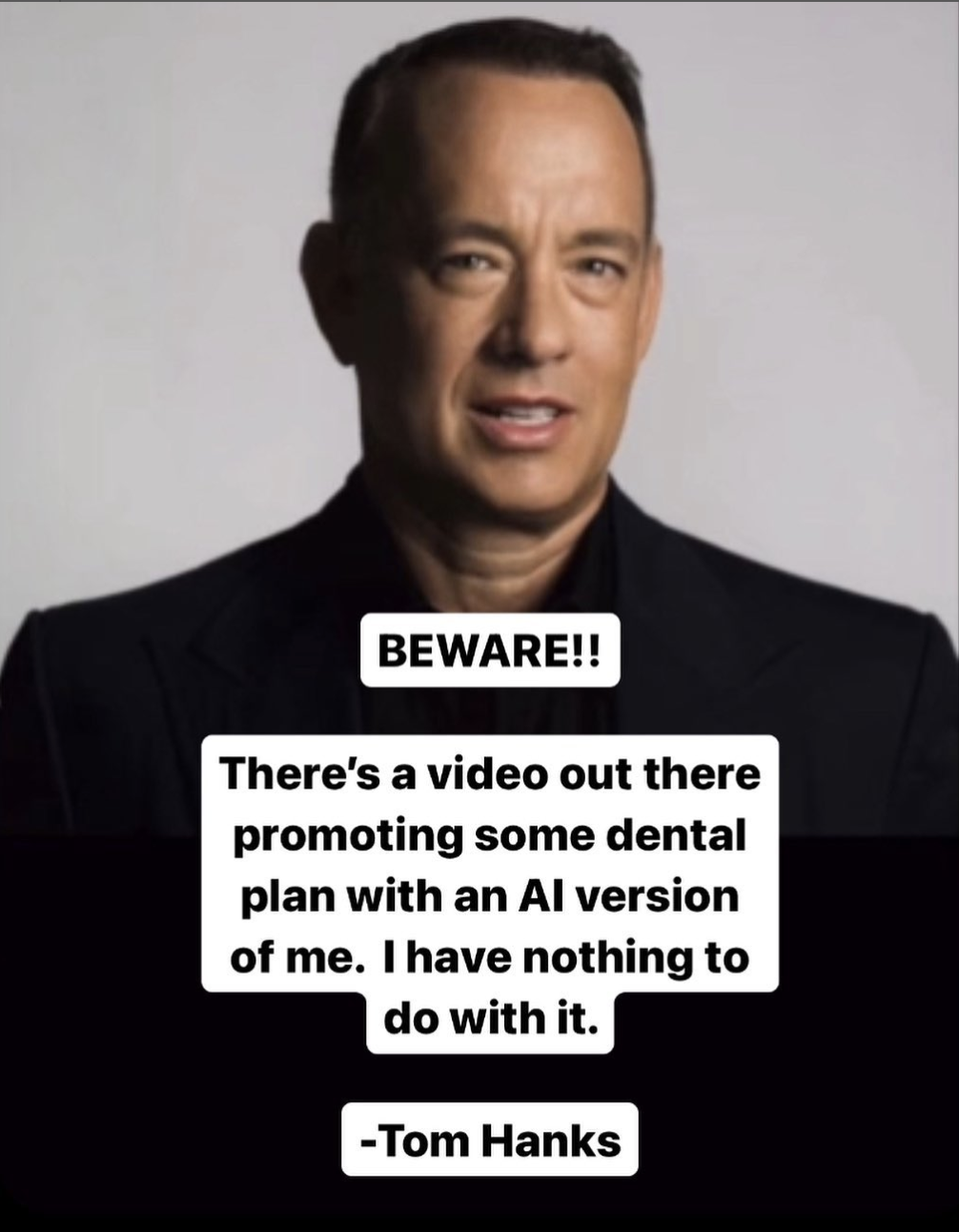

"Deepfakes and synthetic news misinform and make it hard to distinguish what’s real from what’s not," says Roshat Adnani, APAC MD at M+C Saatchi Performance. "And if your logo appears next to that sort of thing, even accidentally, people can read it as an endorsement."

The risk is real. AI-generated content is growing at a pace we have never seen before, with forecasts suggesting that up to 90 per cent of web content could be AI-produced by 2026. And the concern is not just the volume but the sophistication.

"We are entering a world where fake articles, imagery and even deepfake video can blend seamlessly into legitimate environments making it increasingly difficult to distinguish credible content from AI generated material," says Aaron Jansen, director of commercial and media operations, Bench Media. "For brands that have spent years building trust and equity in-market, even brief exposure alongside misinformation or MFA (made-for-advertising) environments can have a long lasting impact. Brand safety frameworks were never built for a world where content can be created and amplified at scale in minutes."

There has also been a rise in AI-driven sites built purely to farm programmatic budgets. And while there are existing tools and controls that help, they’re now up against a threat they weren’t originally designed for.

"These tools struggle to keep up with content that changes faster than they can, such as deepfakes, synthetic misinformation, or hybrid pages designed to bypass filters," says Helmi. "These gaps exist not because the technology is weak, but because new threats appear every day."

In APAC, the problem is amplified: the region's heavy social media use, fragmented media, and diversity of languages and market contexts make it a fertile breeding ground for AI misuse.

Transparency is key

To combat the increasing number of risks that AI-generated content poses, legacy media verification platforms like DoubleVerify have recently launched new tools like DV AI Verification to help advertisers identify and manage interactions with AI agents, including both declared bots like ChatGPT and undeclared, potentially malicious ones.

As part of its Agent ID Measurement offering, DV claims that it analyses nearly two billion interactions with both declared and undeclared AI agents – activity assessed through a deep intelligence layer built to enhance transparency, accountability and performance.

"Transparency is the cornerstone of trust in digital advertising, and it's even more vital as AI changes how content is created, consumed and interacted with," says Gilit Saporta, VP product, fraud prevention, DoubleVerify. "Advertisers need to know who or what is engaging with their media to measure performance accurately and maintain accountability."

DV’s Agent ID Measurement aims to provide this clarity. Advertisers can see which AI systems are interacting with their ads, whether the activity is declared or hidden and what impact it has on media quality. Similarly, DV’s AI SlopStopper helps brands enable post-bid monitoring and pre-bid protection, ensuring that their ads do not deliver to low-quality content environments.

However, some critics remain sceptical of the effectiveness of media verification tools.

"Today's brand safety relies on signals in the bid request, all of which can be falsified," says Dr Augustine Fou, founder of Fou Analytics. "Bad guys always falsify signals in the bid request. And today's brand safety looks for keywords in the URL, not on the page, so again they miss obvious bad content."

Fou says he has zero confidence in today's media verification and detection tools and points to research documenting their failures over the last 10 years.

"Advertisers don't need to pay for brand safety verification; they just need to create an inclusion list of good websites and apps to show their ads," adds Fou. "There are just far too many AI slop and fraudulent websites and apps to block, and there are infinite numbers of new ones that spring up to eat their budgets."

The risk is not AI itself; it’s the overconfidence around it

In any case, many media buyers agree that leaning too heavily on AI tools without keeping rigorous human checks in place is not effective.

"The volume of AI-generated content is exploding, and many publishers treat their models as if they are infallible," says Orange Line’s head of media, Alberto Sanchez. "This creates blind spots. When deepfakes, synthetic misinformation, or low-effort slop sites slip through, it is usually because someone assumed the machine would catch everything. The risk is not AI itself; it’s the overconfidence around it."

While the tech can help filter the noise, real people’s judgment is still needed. Today’s solutions are still relevant, especially for classification, but not for contextual understanding. They can flag explicit risks, but they often struggle with nuance, satire, fast-moving misinformation trends, or high-quality synthetic media.

"This struggle stems from their difficulty with cultural context, evolving diverse languages, and the lack of inherent 'common sense' reasoning that human oversight provides," says Zachary Lim, head of IPG Mediabrands Singapore. "Our agency doesn't solely rely on automated tools. As a process, we layer in robust human review and custom contextual analysis to provide an additional safety net for our clients' campaigns, especially for sensitive categories or high-profile placements."

Technology alone will not solve this

And while AI-powered verification tools may have made it easier to spot unsafe environments in real time, gaps and limitations remain. "One of the biggest gaps in brand safety today is not technology, but mindset," says Helmi. "Advertisers still hesitate to use stronger verification layers because they worry about CPM or CPA. This isn't because they don't know the risks; brands are aware, but often choose short-term savings over long-term reputation."

Another problem is that most safety measures only react after the fact. AI-generated media changes much faster than platform classifiers can adapt. By the time systems respond, bad actors have already moved on.

To really protect brand reputation and ROI, Sanchez at Orange Line says marketers need three things: better cross-platform transparency, tighter human review layers for high-risk placements, and clearer regulatory standards that define what counts as unacceptable AI-generated content.

We are now seeing highly convincing AI-generated content across social platforms, created to maximise engagement above all else. Technology alone will not solve this. Platforms need to couple investment in detection tools with dedicated human review teams who can continually train those systems and step in when automation falls short.

A similar challenge is increasingly evident across the open web, where some publishers are pushing misleading or fabricated content purely to drive revenue. At some point, regulation may need to intervene, particularly as the line between authentic and AI-generated content becomes harder to discern, and stronger accountability is required to protect both consumers and advertisers.

"Technology alone cannot solve this. Human oversight is crucial and should be reintroduced at the points where brand risk is highest," says Sanchez. "Clear rules about what AI-generated media must disclose will also reduce grey areas that platforms currently do not handle well."

At the same time, technology is only one part of the solution.

"If brands continue to chase the cheapest impressions on the open exchange, they will always be exposed to low-quality AI-generated environments no matter how good verification becomes," says Jansen. "Prioritising curated supply paths, direct relationships with trusted publishers, and outcome-based measurement can significantly reduce risk even when detection is not perfect."

Source: Campaign Asia-Pacific